2022–2023 · India AI Safety Initiative

India's first Alignment Research Fellowship

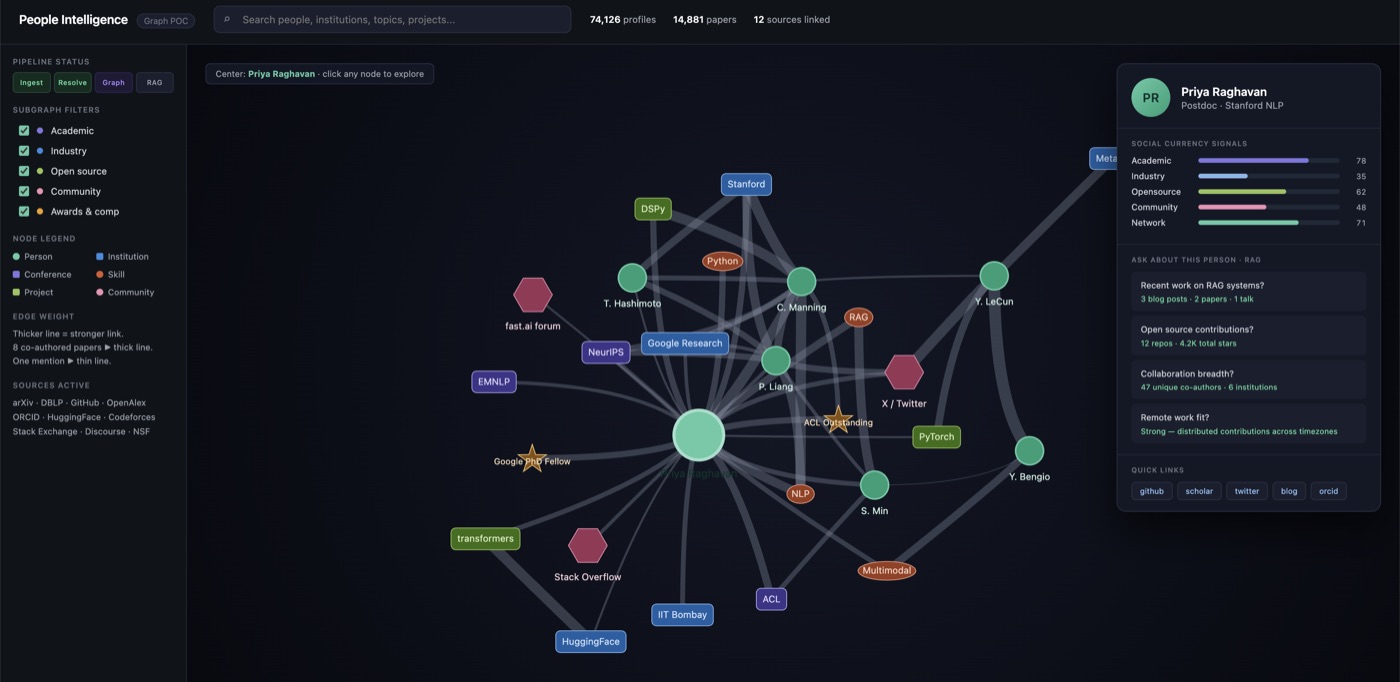

Founded under the India AI Safety Initiative on a $65K EAIF grant, India's first Alignment Research Fellowship attracted 600+ applicants across 40 STEM universities, of whom 24 (top 4%) were selected. Ten papers from that cohort are now published or under review.

The applicant pool itself was, in my view, the most informative output: a near-complete map of where serious AI safety interest sits in Indian academic institutions and a useful indication of where the next wave of researchers is likely to come from.