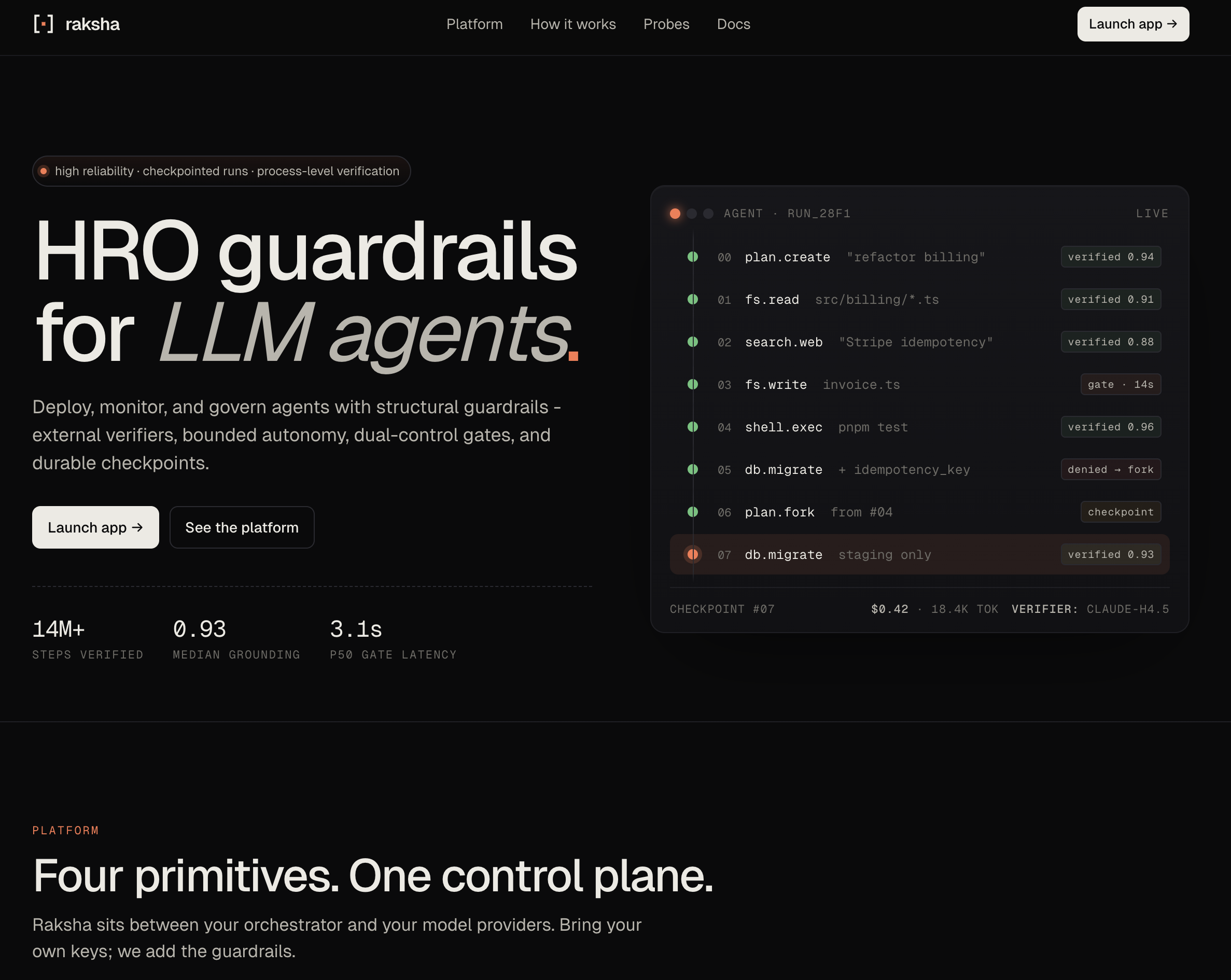

Founder · Jan 2026 · in pilot

Measuremint — AI-powered talent intelligence platform

A voice-first AI career agent for high-volume markets, currently in pilot in India with 2K–20K applicants per job. ElevenLabs and Claude run the 10-minute AI-led candidate interviews; PostgreSQL handles storage.

- Three-tier evaluation funnel — CV parsing → async voice challenge → full AI interview.

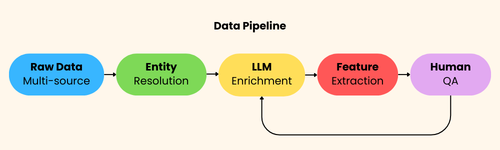

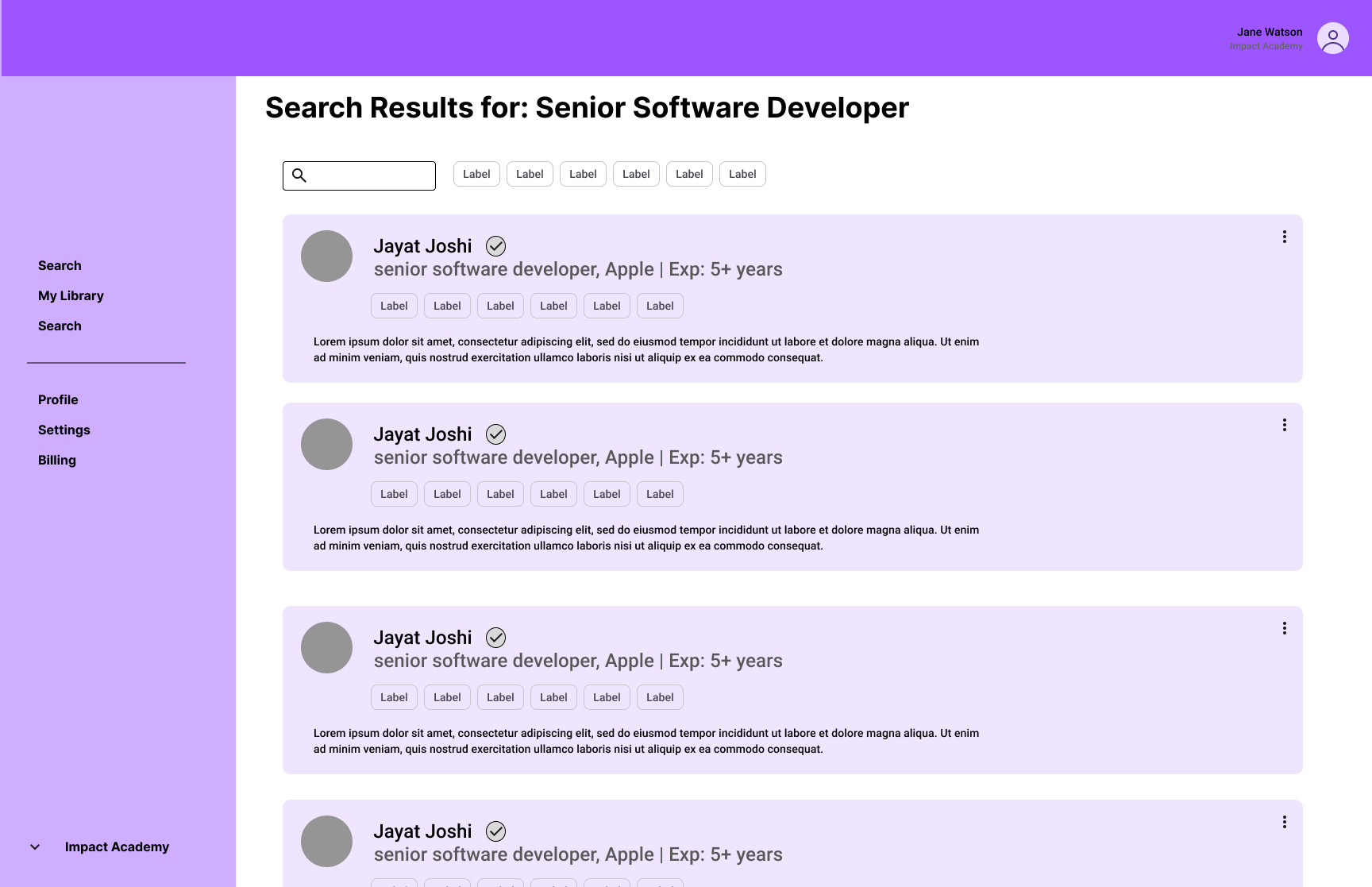

- Unified candidate pipeline (demo) — multi-source ingestion (Apollo, OpenReview, GitHub) with handle-based deduplication and a three-tier caching layer that cuts external API costs by 90%.

- Network Engine — a graph DB linking 100K+ STEM profiles across 500+ sources, with RAG-powered search and degree analysis to surface influencers, talent clusters, and research ties.

- Nexus — a cross-platform aggregation pipeline that monitors public layoff signals across Reddit, GitHub, and HackerNews, assembles deduplicated candidates weighted by reliability, and feeds leads into recruiting workflows.
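
As a rough illustration of the handle-based deduplication mentioned for the unified candidate pipeline, here is a minimal sketch. It is not the project's actual code: the record shape (`handle`, `source`, plus free-form fields), the `Candidate` class, and the "first non-empty value wins" merge rule are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Candidate:
    """Hypothetical merged profile, keyed by a normalized handle."""
    handle: str
    sources: set = field(default_factory=set)
    fields: dict = field(default_factory=dict)


def normalize_handle(raw: str) -> str:
    """Trim whitespace, lowercase, and drop a leading '@' or trailing '/'."""
    return raw.strip().lower().lstrip("@").rstrip("/")


def dedupe(records):
    """Merge records that share a normalized handle.

    Assumed merge rule: the first non-empty value seen for a field wins.
    """
    merged = {}
    for rec in records:
        key = normalize_handle(rec["handle"])
        cand = merged.setdefault(key, Candidate(handle=key))
        cand.sources.add(rec["source"])
        for name, value in rec.items():
            if name in ("handle", "source"):
                continue
            if value and name not in cand.fields:
                cand.fields[name] = value
    return list(merged.values())


# Illustrative input only; these records are made up.
records = [
    {"handle": "@Ada", "source": "github", "name": "Ada"},
    {"handle": "ada ", "source": "openreview", "email": "a@x.org", "name": ""},
    {"handle": "bob", "source": "apollo"},
]
result = dedupe(records)
```

Here the two `ada` records collapse into one profile that remembers both sources, while `bob` stays separate. A real pipeline would likely need per-source handle mapping as well, since the same person can use different handles on different platforms.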

The thesis: high-volume hiring in India and similar markets is where AI-native recruiting will land first. The same infrastructure that supports talent-side experiments at SteadRise can be productised for the broader ML / SWE labour market, with safety, fairness, and candidate experience tested at scale before the model lands at frontier-lab scale.