Sept 2022, updated 2023

EAIF — AI Safety Field-Building in India

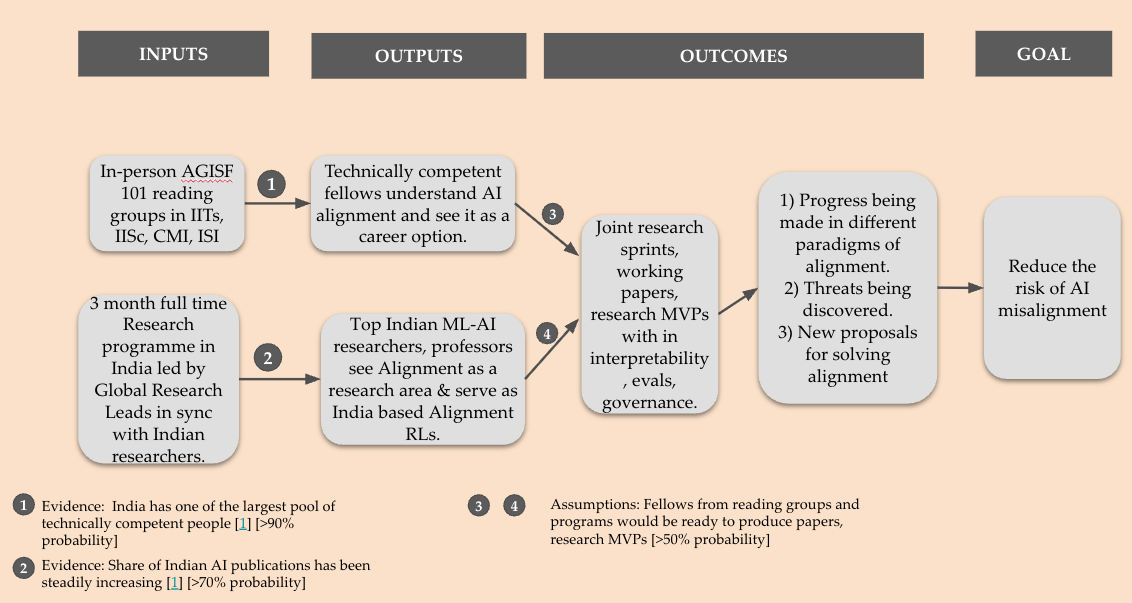

A four-pathway pilot proposal for longtermist field-building in India:

- Incubate high-fidelity, career-focused university groups (HAIST-style) across top Indian institutions, starting with an AGISF-based pilot at IIT Delhi.

- Partner with an existing existential risk initiative (ERI), such as CERI or CHERI, to stand up an Indian chapter.

- Build a global feeder pipeline with fast-tracked interviews and screening at x-risk organizations.

- As a long-horizon bet, stand up an in-house J-PAL-style interdisciplinary x-risk research lab.

The proposal was built on conversations with 80+ students across 13 Indian institutions in 8 states, and shaped by feedback from senior field-builders and grantmakers at Rethink Priorities, CEA, FAR.AI, Momentum, and Stanford. It became the strategic foundation for AISCF, GAISF, and SAFL.